Rapid development of Interactive Content

By Mario A. Martínez Latorre

2021-06-10

A better approach to rapid prototyping of 3D scenes

From initial conception to the final functional version, developing interactive content for informative, training or recreational purposes involves a great deal of work across several areas in which we at VÓRTICE specialize.

Broadly, these areas are: the design of the interactive's mechanics, the graphic and sound resources, and the implementation through software development.

It is in the software where an agile development tool would help most: one that yields fully functional versions in the shortest possible time.

Ideally it would be a visual, intuitive tool that spares us, to a greater or lesser extent, the tedious work of writing code, at least for the most basic and repetitive aspects of development.

This is where Reality Composer comes in.

We are not very into Apple, but we are in love with Reality Composer

Reality Composer is one of the applications in Apple's Augmented Reality "suite", along with Reality Converter, the USDZ Tools and Xcode (12+) itself.

Specifically, it is aimed at building, testing and refining interactive experiences, which can then be taken into AR, if desired, through the ARKit engine. That functionality is built into this great application, which is available in both desktop and mobile versions.

The tool is eminently visual, with an intuitive user interface (UI) and well-balanced handling (UX). It lets us create 3D scenes, covering their visual and sound content as well as their events and allowed behaviors.

It is thanks to these events and behaviors that this rapid prototyping tool removes some of the need for programming (always within limits, and with improvements still to come), as we explain later in this blog post.

USDZ is not a currency

To build scenes in Reality Composer we work with different 3D elements, which can be either geometric primitives (cubes, spheres, cones, ...) or custom models, which we import as USDZ files.

The USD (Universal Scene Description) format, together with glTF (GL Transmission Format), which we have already discussed in this blog, are frameworks for exchanging and visualizing three-dimensional graphic data, and both have become de facto standards within the industry. A USDZ file is nothing more than a USD scene and its resources packaged in an uncompressed, unencrypted zip archive.
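Because a USDZ package is an ordinary zip archive, its contents can be listed with any zip library. The following is a minimal sketch using Python's standard `zipfile` module. The helper name `describe_usdz` and the file names inside the stand-in package are hypothetical, and the in-memory archive is only a placeholder for illustration, not a valid USDZ asset.

```python
import io
import zipfile


def describe_usdz(source):
    """Return (name, size_in_bytes, stored_uncompressed) for each entry in a package."""
    with zipfile.ZipFile(source) as package:
        return [
            (info.filename, info.file_size, info.compress_type == zipfile.ZIP_STORED)
            for info in package.infolist()
        ]


# Build a tiny stand-in package in memory so the sketch runs without a real asset.
# The entry names and contents are hypothetical placeholders.
buffer = io.BytesIO()
with zipfile.ZipFile(buffer, "w", compression=zipfile.ZIP_STORED) as package:
    package.writestr("cow.usdc", b"placeholder geometry")
    package.writestr("textures/cow_albedo.png", b"placeholder texture")

for name, size, stored in describe_usdz(buffer):
    print(f"{name}: {size} bytes, stored uncompressed: {stored}")
```

With a real asset, passing the path of the `.usdz` file to `describe_usdz` instead of the in-memory buffer should list the scene file and its textures in the same way.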

The main differences between USD and glTF are twofold. First, USD is a private initiative (Pixar and Apple), while glTF is the result of joint work by organizations and companies within the Khronos Group. Second, a USD file can hold not only the geometry that makes up a scene, its sounds and its animations, but also aspects of its behavior. In this way, a scene can be delivered already endowed with the intelligence it needs to be interactive.

Reality Composer will allow us to export our scenes to both .usdz and .reality files.

Aliens & Cowboys

At VÓRTICE we firmly believe that there is no better way to explain any process or technology than by means of a fully functional example.

We will therefore walk through the steps for creating an interactive experience, which we will also take to Augmented Reality, using the well-known story in which a herd of cows, peacefully grazing in a meadow, is abducted by a UFO that suddenly appears above them.

Having a storyline gives the exercise an attractive and practical focus, and lets us work as if it were a real project.

A simple matter of assets

Since this is a non-profit demonstration, we have chosen to use some 3D models made by third parties, taken from the wonderful online repository Sketchfab under a Creative Commons Attribution license:

- the UFO / "ufo" by thundercg9;

- its tractor beam, which is nothing more than a conical 3D primitive with some transparency;

- what we could call abduction spheres / "Sphere" by m.Avramov;

- of course some cows / "Cow NPC" by Owlish Media;

- and 3D texts for different uses.

The soundtrack of the interactive, in turn, has been built from incidental sound effects (SFX) and different ambient sounds. For this purpose, we have used the resources that Reality Composer offers in the form of a large library of downloadable audio files.

Making a scene

Except in extraordinarily simple or trivial cases, any software development obviously needs to start from proper planning.

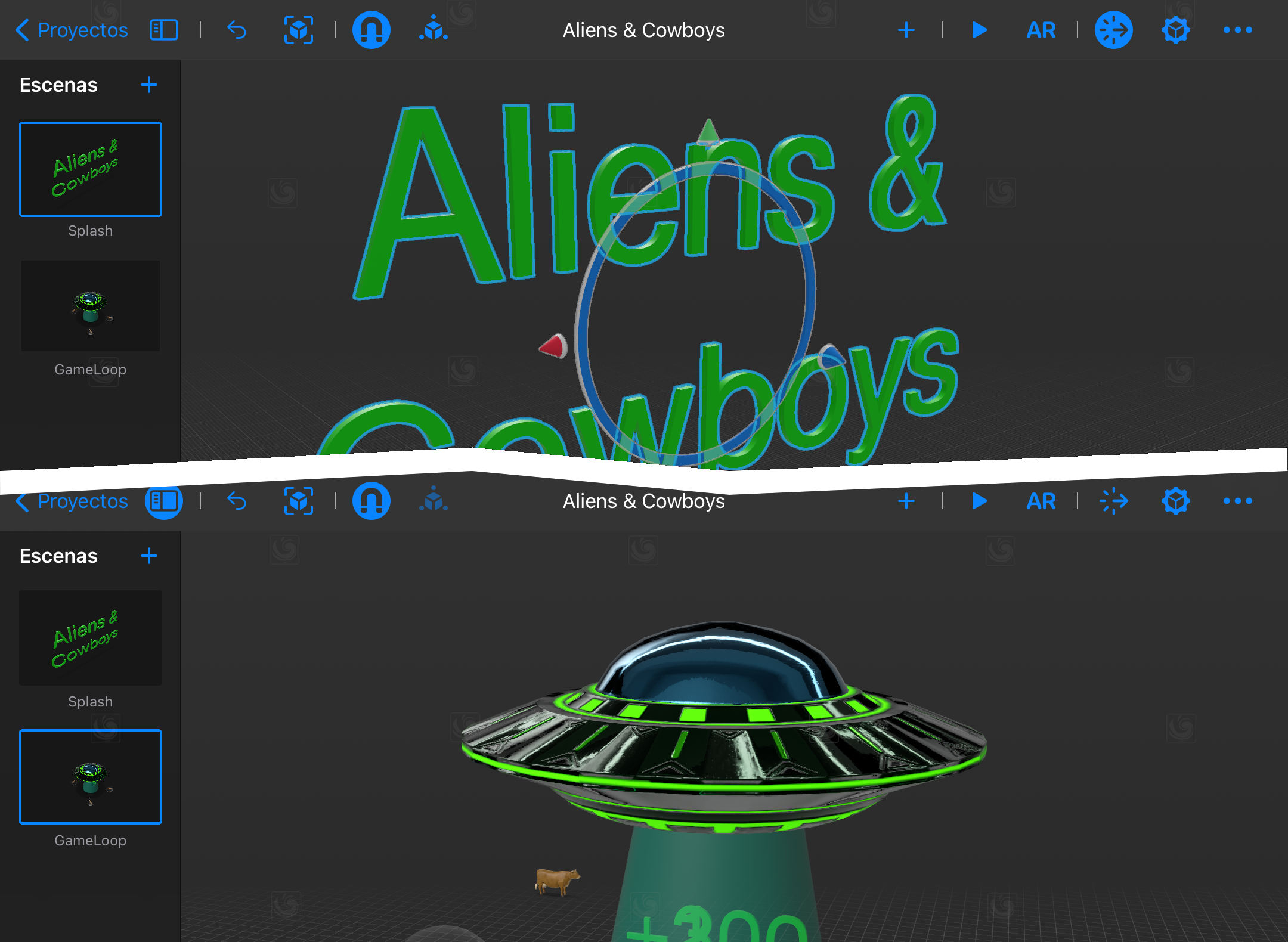

Reality Composer nudges us towards these good practices through the concept of scenes, which we access through a dedicated collapsible panel.

In this context, we can consider a scene as the representation of a "screen" or "stage", with a differentiating identity within the set that will configure the interactive experience. The behaviors, which we will see later in detail, will allow us to jump from one scene to another when certain conditions are met.

For "Aliens & Cowboys" we will consider only two scenes: the one that plays the role of start and presentation (usually called "splash") and the one that will host all the functionality, the "game loop" if we want to call it that.

Always open to other realities

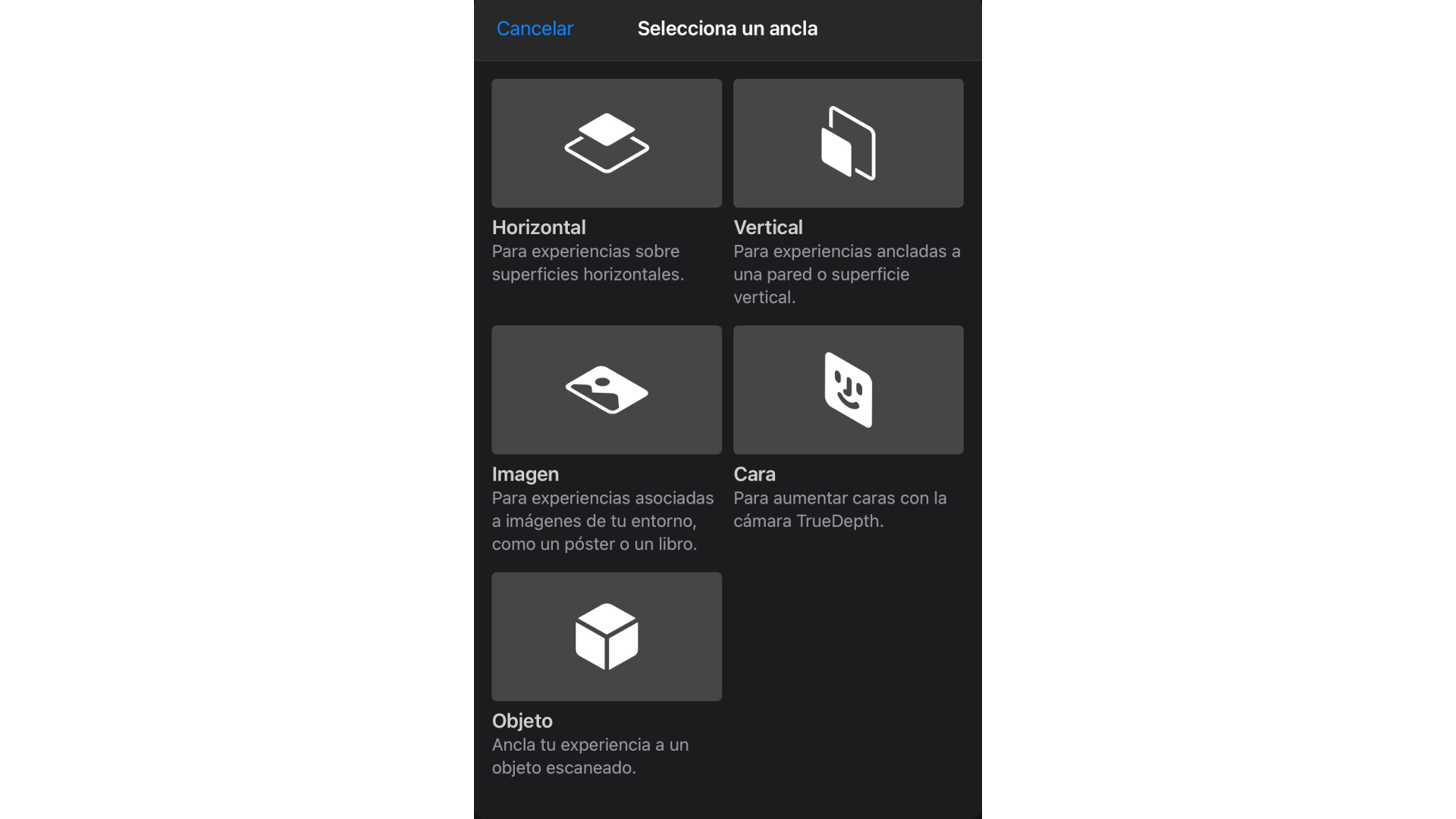

Although Reality Composer allows us to run the interactive we are designing without resorting to Augmented Reality, for this project we do want AR, running on an eighth-generation iPad with an A12 Bionic chip.

Either way, when creating a scene Reality Composer always asks which type of AR anchor we want to use; in our case the appropriate one is the horizontal plane.

These AR anchors are logical entities that ensure that both the 3D models and the spatialized sound sources keep the same position and spatial orientation, preserving the coherence needed to sustain the illusion that the virtual entities are located in the real world.
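One way to picture the role of an anchor is as a shared reference point: every model and sound source stores only an offset from it, so when the tracking system refines its estimate of where the plane is, everything moves together and the scene stays coherent. The sketch below illustrates that idea with translation only (orientation is left out for brevity). The `Vec3`, `Anchor` and `Entity` classes and all coordinates are hypothetical and are not Reality Composer or ARKit types.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vec3:
    x: float
    y: float
    z: float

    def __add__(self, other):
        return Vec3(self.x + other.x, self.y + other.y, self.z + other.z)


@dataclass
class Anchor:
    """Hypothetical stand-in for a horizontal-plane anchor: a position in the real world."""
    world_position: Vec3


@dataclass
class Entity:
    """A model or sound source placed relative to its anchor."""
    name: str
    local_offset: Vec3

    def world_position(self, anchor):
        # The entity never stores a world position of its own.
        return anchor.world_position + self.local_offset


anchor = Anchor(Vec3(0.0, 0.0, -1.0))
cow = Entity("cow", Vec3(0.25, 0.0, 0.0))
ufo = Entity("ufo", Vec3(0.25, 0.5, 0.0))

before = (cow.world_position(anchor), ufo.world_position(anchor))

# The plane estimate is refined: only the anchor changes.
anchor.world_position = Vec3(0.0, 0.125, -1.0)
after = (cow.world_position(anchor), ufo.world_position(anchor))

print(before)
print(after)
```

Both entities shift by the same amount, so the UFO stays at the same height above the cow before and after the update.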

Learning to behave

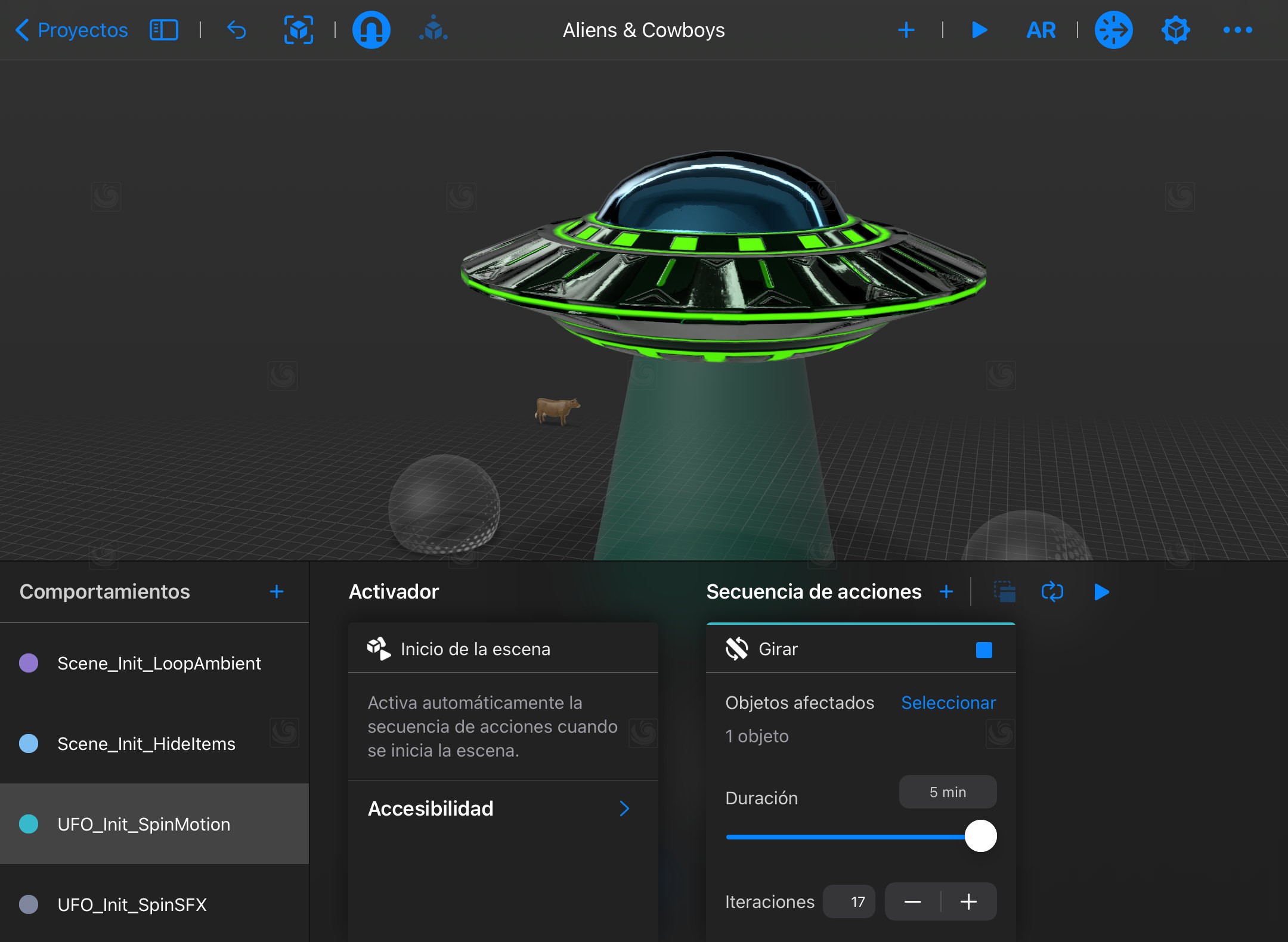

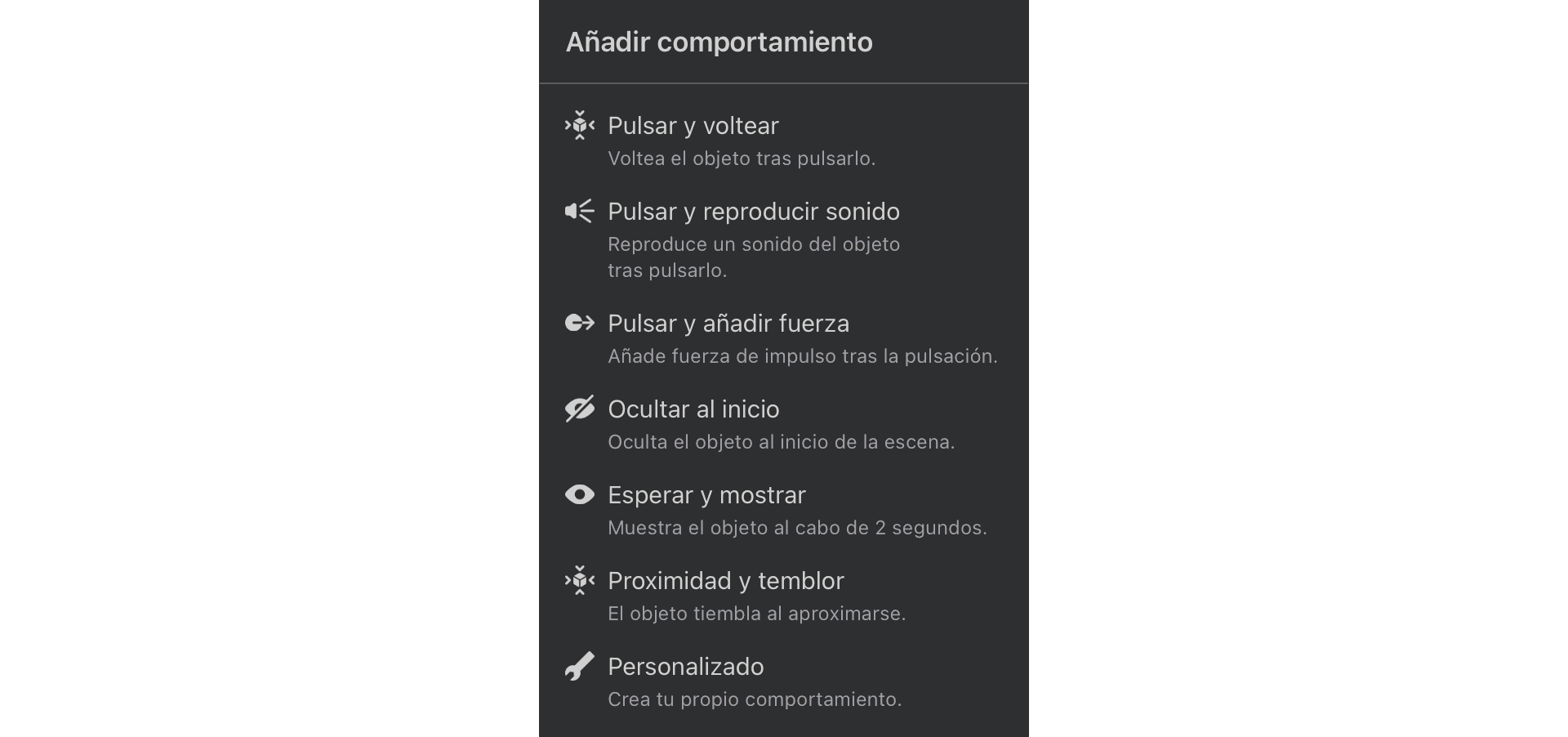

In order to introduce in the interactive the logic necessary for its operation (its programming, to put it more plainly), Reality Composer uses the classic event-driven architecture, through the so-called Behaviors.

These Behaviors will act as event handlers (or listeners if you prefer), which will be triggered when this or that event occurs.

To this end, Reality Composer offers a series of predefined behaviors, and also lets us build custom ones entirely from scratch.

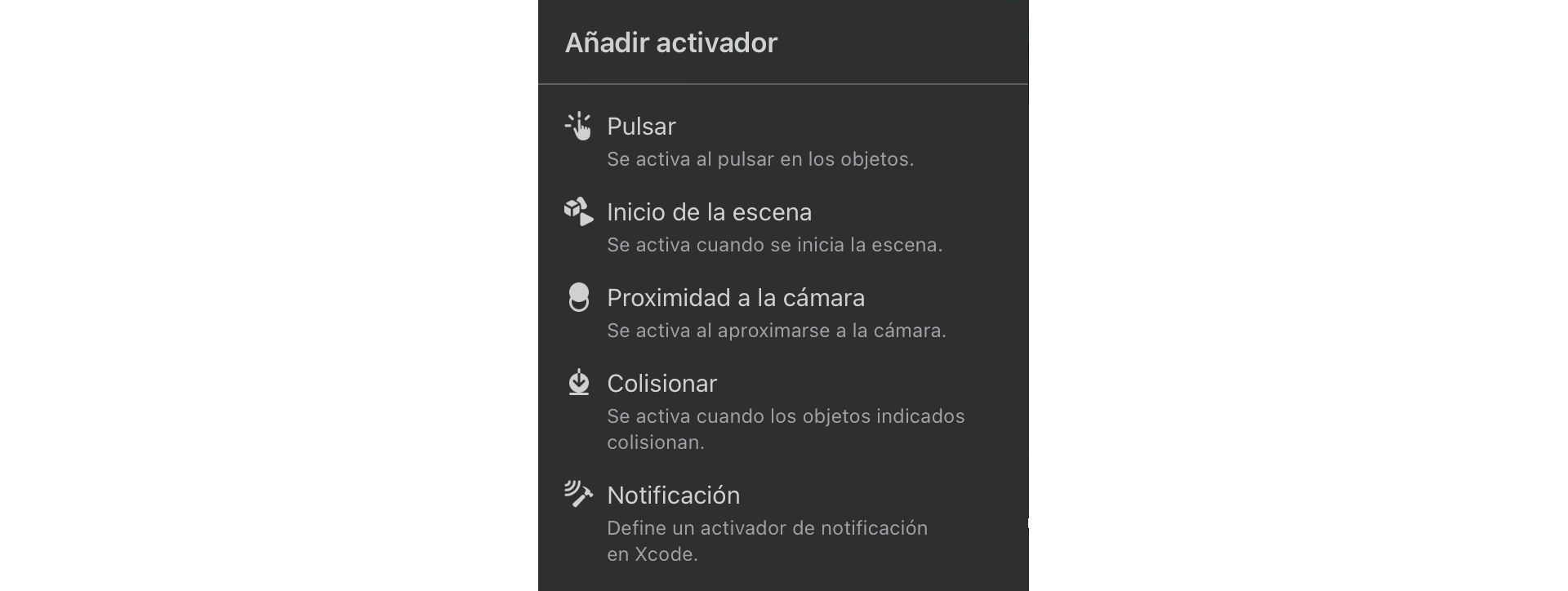

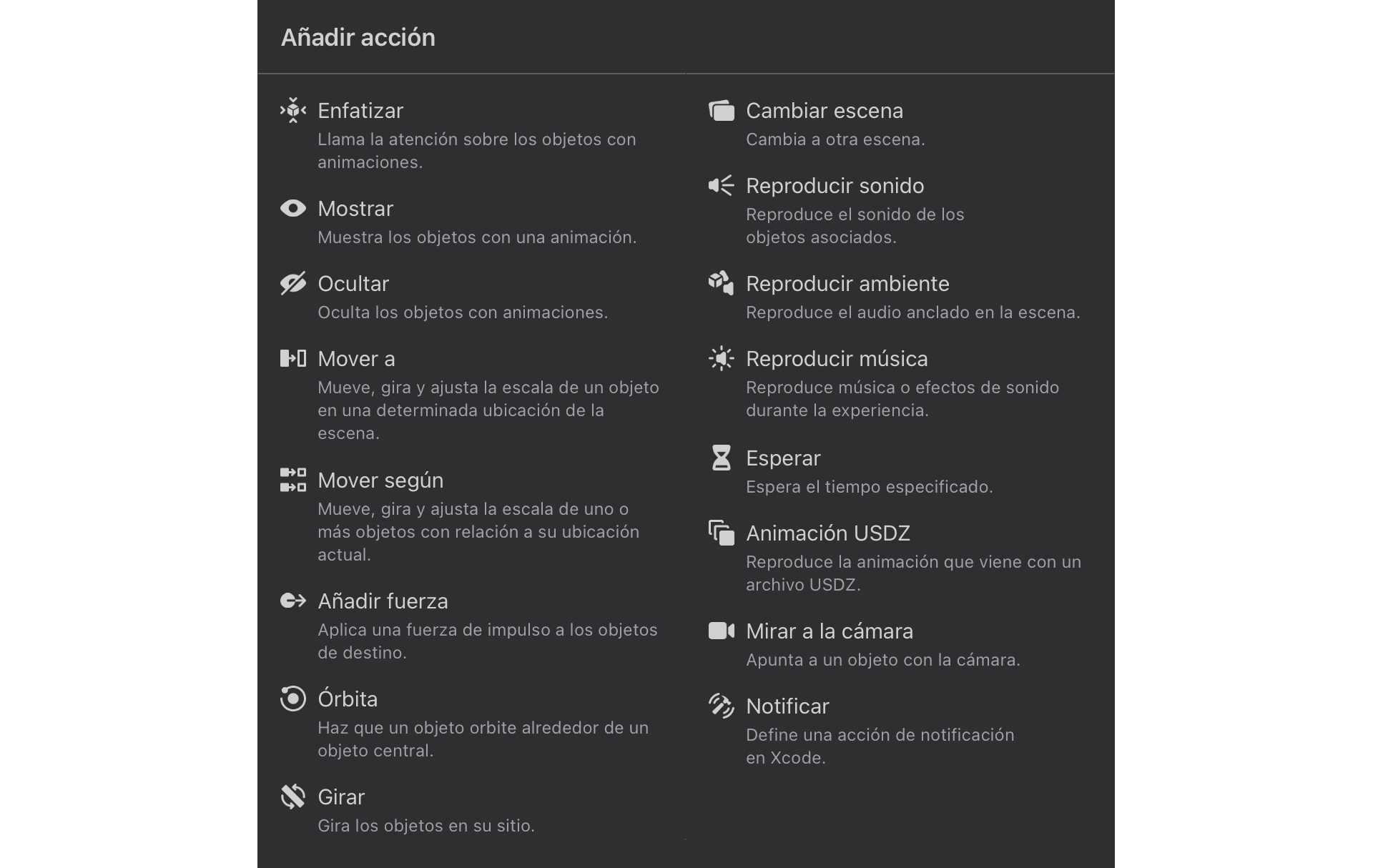

Each Behavior is formed by an Activator (or Trigger), which is the event that initiates it, and the Sequence of Actions that we want to be executed in response.
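Reality Composer builds all of this visually, but the trigger-plus-action-sequence structure can be sketched in a few lines of code for readers who think in those terms. This is only an illustrative model, not Reality Composer's API: the `Behavior` and `Scene` classes, the event strings such as `"tap:Cow01"`, and the individual actions are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Behavior:
    """One trigger plus the ordered sequence of actions run in response."""
    name: str
    trigger: str
    actions: List[Callable[[dict], None]] = field(default_factory=list)


class Scene:
    def __init__(self, name):
        self.name = name
        self.state = {"log": []}
        self.behaviors = []

    def add(self, behavior):
        self.behaviors.append(behavior)

    def emit(self, event):
        """Run, in order, the actions of every behavior whose trigger matches the event."""
        for behavior in self.behaviors:
            if behavior.trigger == event:
                for action in behavior.actions:
                    action(self.state)


def log_action(label):
    """Build an action that only records its label, standing in for a real effect."""
    def action(state):
        state["log"].append(label)
    return action


scene = Scene("game loop")
# Hypothetical triggers and action sequences, for illustration only.
scene.add(Behavior("UFO_Init_SpinMotion", "scene_start", [log_action("spin UFO")]))
scene.add(Behavior(
    "Cow01_Abduction",
    "tap:Cow01",
    [log_action("show tractor beam"), log_action("lift Cow01"), log_action("hide Cow01")],
))

scene.emit("scene_start")
scene.emit("tap:Cow01")
print(scene.state["log"])
```

Events that match no trigger are simply ignored, which mirrors the listener model described above: a behavior stays dormant until its own event occurs.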

Considering three "active" cows, the behaviors we have implemented in the case of "Aliens & Cowboys" are:

- Scene_Init_LoopAmbient

- Scene_Init_HideItems

- UFO_Init_SpinMotion

- UFO_Init_SpinSFX

- Cow01_Abduction

- Cow02_Abduction

- Cow03_Abduction

With such a background, who wouldn't want to take on the role of a little green woman or man and start abducting cows?